Adaptive marking with Numbas

Christian Lawson-Perfect, Newcastle University. @NclNumbas

Hi

Christian Lawson-Perfect, School of Mathematics and Statistics, Newcastle University.

I've been working on Numbas as its lead developer since 2011.

What is Numbas?

Numbas is an open-source system developed by the e-Learning Unit of Newcastle University's School of Mathematics and Statistics, drawing on many years of use, experience and research in e-assessment.

It's aimed at numerate disciplines.

It creates SCORM-compliant exams that run entirely in the browser and work with VLEs such as Blackboard and Moodle.

History

Numbas follows the CALM model.

At Newcastle, we used the commercial system i-assess for six years before switching to Numbas.

Development began in 2011 with the aim of replacing i-assess.

Design goals

- Scalable, reliable and accessible to a broad range of users.

- Good at maths, but usable for other subjects too.

- Good-looking and easy for students to use.

- Usable by question authors who aren't experts.

- Emphasis on creating rich formative e-assessment and learning materials.

- Feedback is an important part of the design and implementation.

Usability

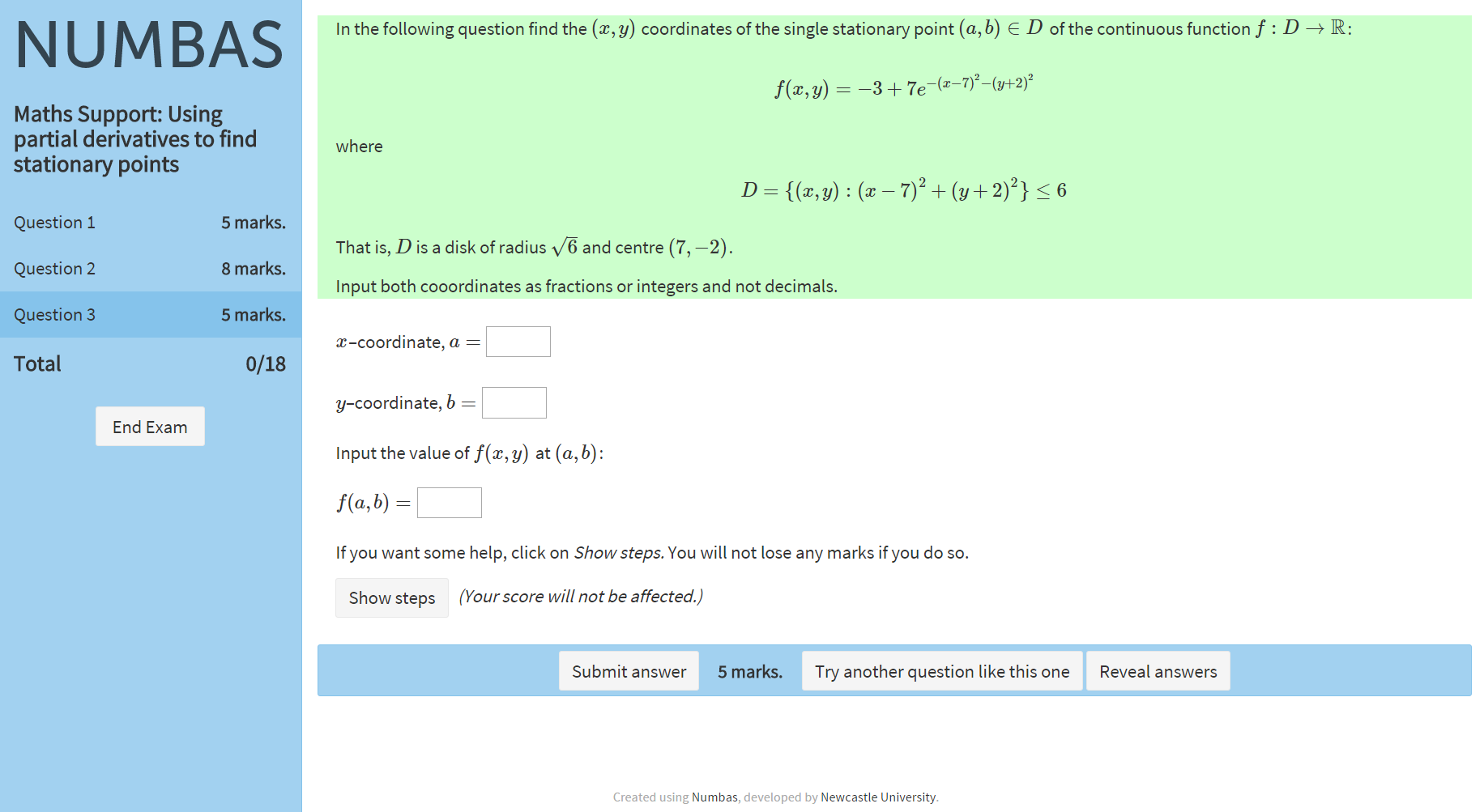

For students

The interface is clean, and accessible by screen readers. Feedback is immediate.

Tests run on any device - desktop, tablet, mobile - with a responsive design.

The interface has been refined over several years in response to student feedback.

Usability

For authors

Using the graphical editor, you can create a simple question with no previous knowledge.

You can then gradually add more complicated elements, such as maths notation written in LaTeX.

Questions can be test-run immediately.

Template questions make customising existing material easier.

Integration with a VLE

Numbas uses the SCORM 2004 standard to integrate with compliant VLEs, such as Moodle and Blackboard.

Or you can use it without a VLE.

Customisation

A major motivation for Numbas was the lack of customisability in previous systems.

Interface and logic are completely separated in Numbas - custom themes can change the look of tests, or reimagine how they're run.

Extensions allow the addition of new functions, data types, and resources.

Formative vs summative use

Computer-aided assessment is great for formative assessment.

Students can try randomised questions over and over until they're happy.

Summative assessment poses problems:

- How to prevent cheating?

- Can you ask the right sort of questions for a high-stakes summative exam?

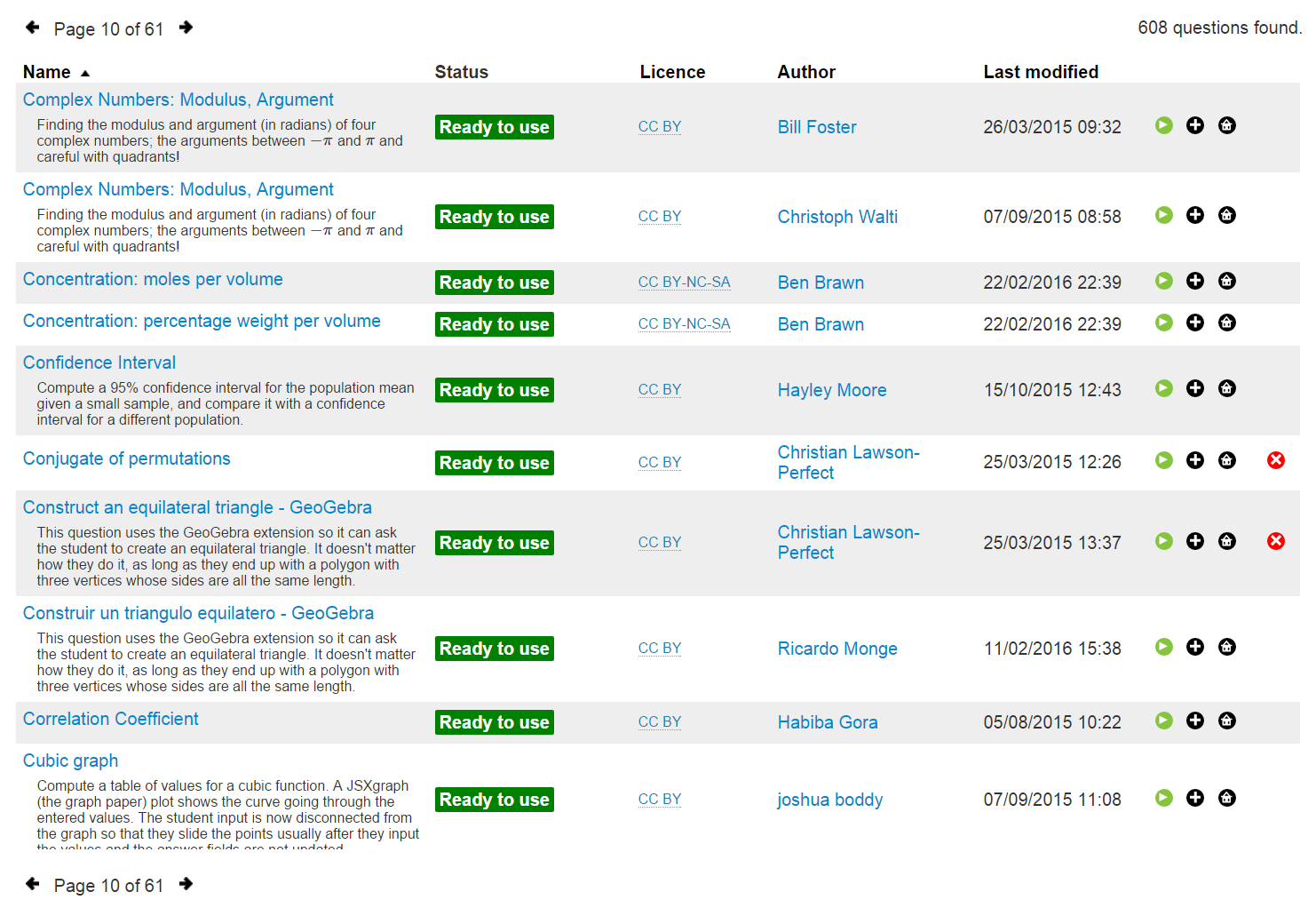

Open access

Compiled Numbas tests are SCORM packages: they're completely self-contained, which makes them ideal for open-access resources.

We want to establish a community of authors and users producing quality open-access material.

Complexity of questions

Most high school or undergrad maths questions are easily implemented in Numbas.

Randomising questions and marking answers can both be hard, but Numbas makes them easier.

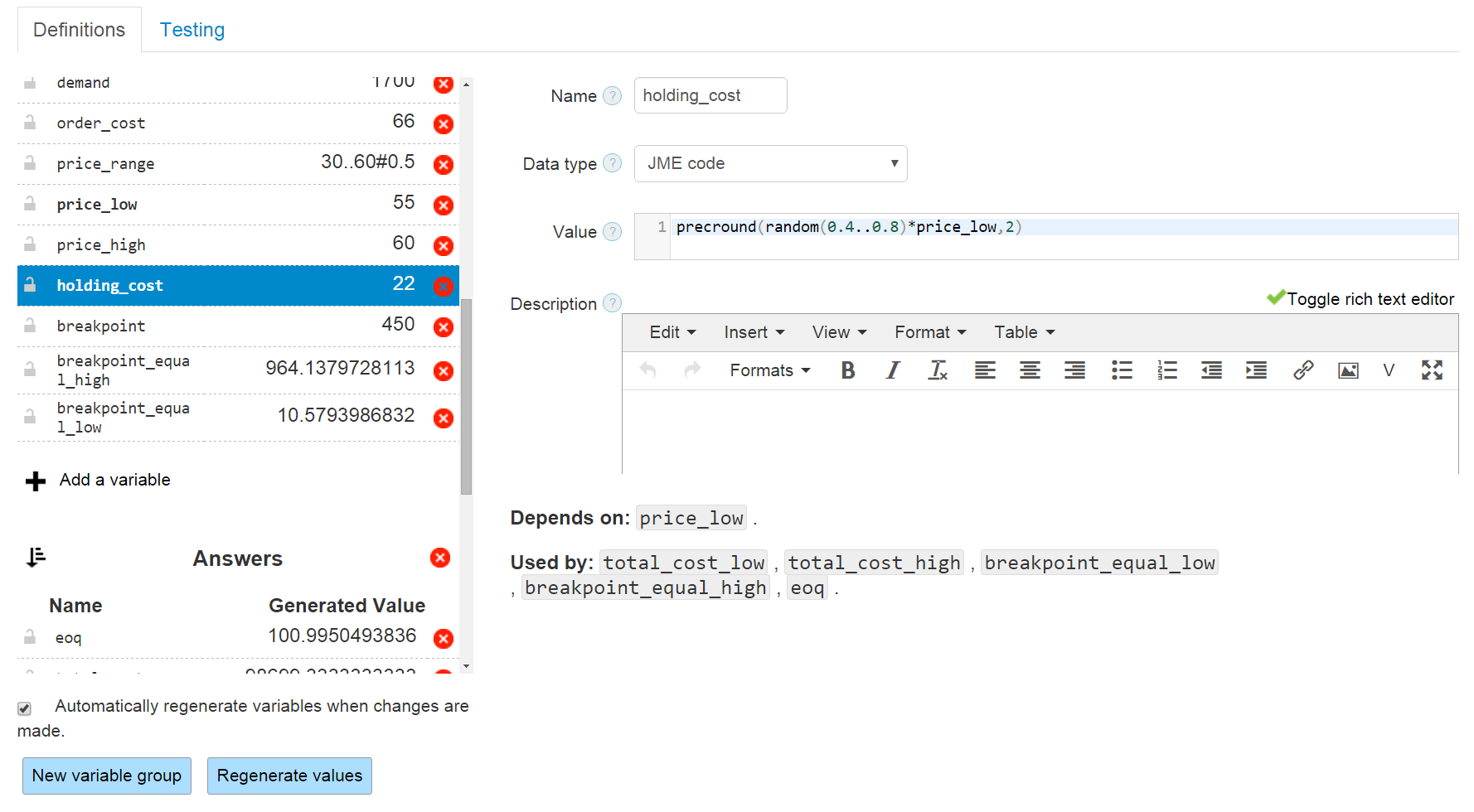

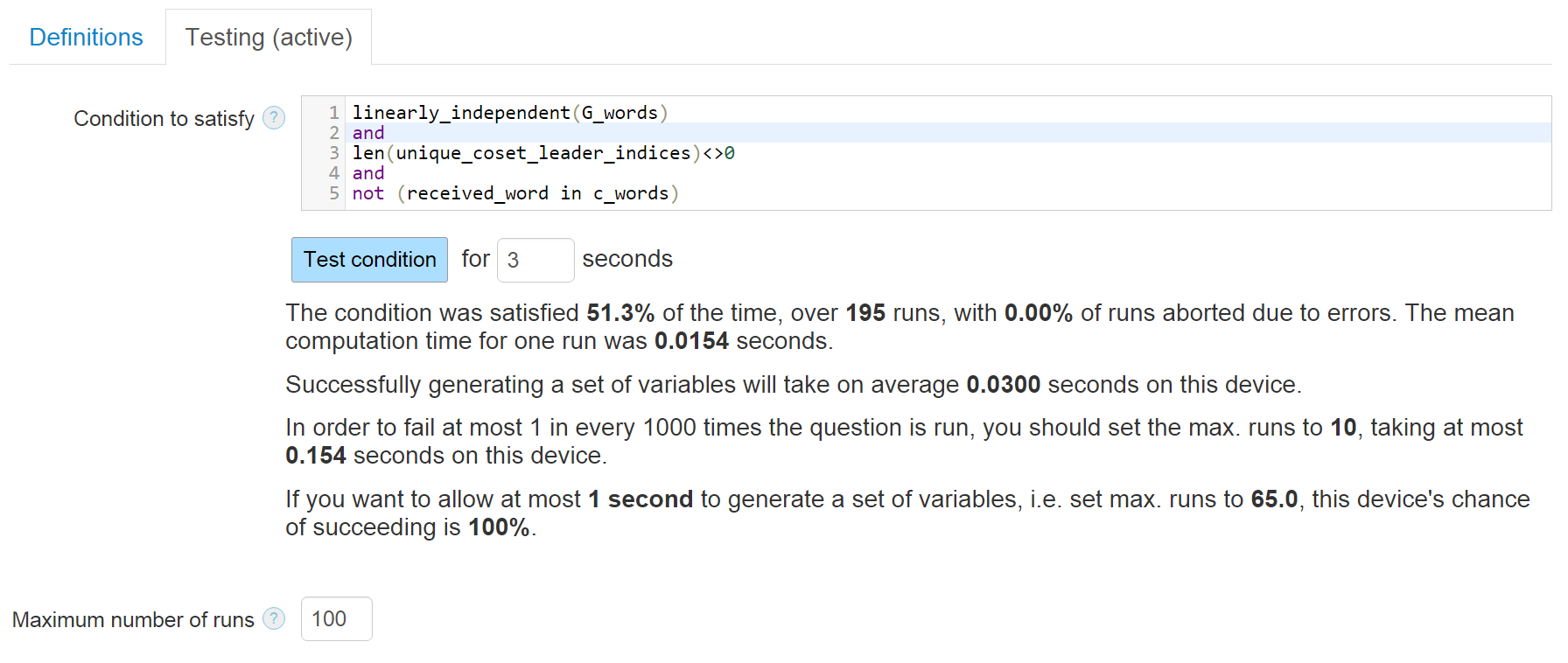

Variable generation

Variables are defined declaratively, and a variable's definition can refer to other variables.
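
To illustrate what "declarative" means here, the following is a minimal Python sketch, not Numbas's own definition format (Numbas authors write variables in its JME language). It assumes each variable is a hypothetical pair of (dependencies, generator); the definitions can be listed in any order, and they are evaluated in dependency order using a topological sort.

```python
import random
from graphlib import TopologicalSorter  # Python 3.9+

# Hypothetical question variables: name -> (names it depends on, generator).
# Deliberately listed out of order: the sort below decides evaluation order.
VARIABLES = {
    "answer": (("c", "b"), lambda v, rng: v["c"] + v["b"]),
    "c": (("a", "b"), lambda v, rng: v["a"] * v["b"]),
    "a": ((), lambda v, rng: rng.randint(2, 9)),
    "b": ((), lambda v, rng: rng.randint(1, 20)),
}

def generate(definitions, rng):
    """Evaluate every variable after all the variables it depends on."""
    graph = {name: set(deps) for name, (deps, _) in definitions.items()}
    values = {}
    # static_order() yields each name only after its dependencies,
    # and raises graphlib.CycleError if the definitions are circular.
    for name in TopologicalSorter(graph).static_order():
        _, make = definitions[name]
        values[name] = make(values, rng)
    return values

values = generate(VARIABLES, random.Random(1))
print(values)
```

Because authors only state what each variable is, reordering or adding definitions never requires them to think about evaluation order.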

The variable definition interface lets you work interactively: generated values appear immediately, and you can test that they have the properties you need.
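
One common way to make sure randomised values have a required property is rejection sampling: regenerate until a condition holds, with a cap on the number of attempts. The Python sketch below assumes that approach; the quadratic example and the names `generate_until` and `max_runs` are hypothetical, chosen for illustration rather than taken from Numbas.

```python
import math
import random

def generate_coefficients(rng):
    """Hypothetical generator for the quadratic a*x^2 + b*x + c."""
    return {"a": rng.randint(1, 5), "b": rng.randint(-10, 10), "c": rng.randint(-10, 10)}

def has_rational_roots(v):
    """Property to test: the discriminant is a non-negative perfect square."""
    d = v["b"] ** 2 - 4 * v["a"] * v["c"]
    return d >= 0 and math.isqrt(d) ** 2 == d

def generate_until(generator, condition, rng, max_runs=100):
    """Regenerate values until the condition holds, giving up after max_runs."""
    for _ in range(max_runs):
        values = generator(rng)
        if condition(values):
            return values
    raise RuntimeError(f"no valid values found in {max_runs} runs")

print(generate_until(generate_coefficients, has_rational_roots, random.Random(0)))
```

Capping the attempts means a condition that is rarely or never satisfiable shows up as an error while authoring, rather than as a question that hangs for a student.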

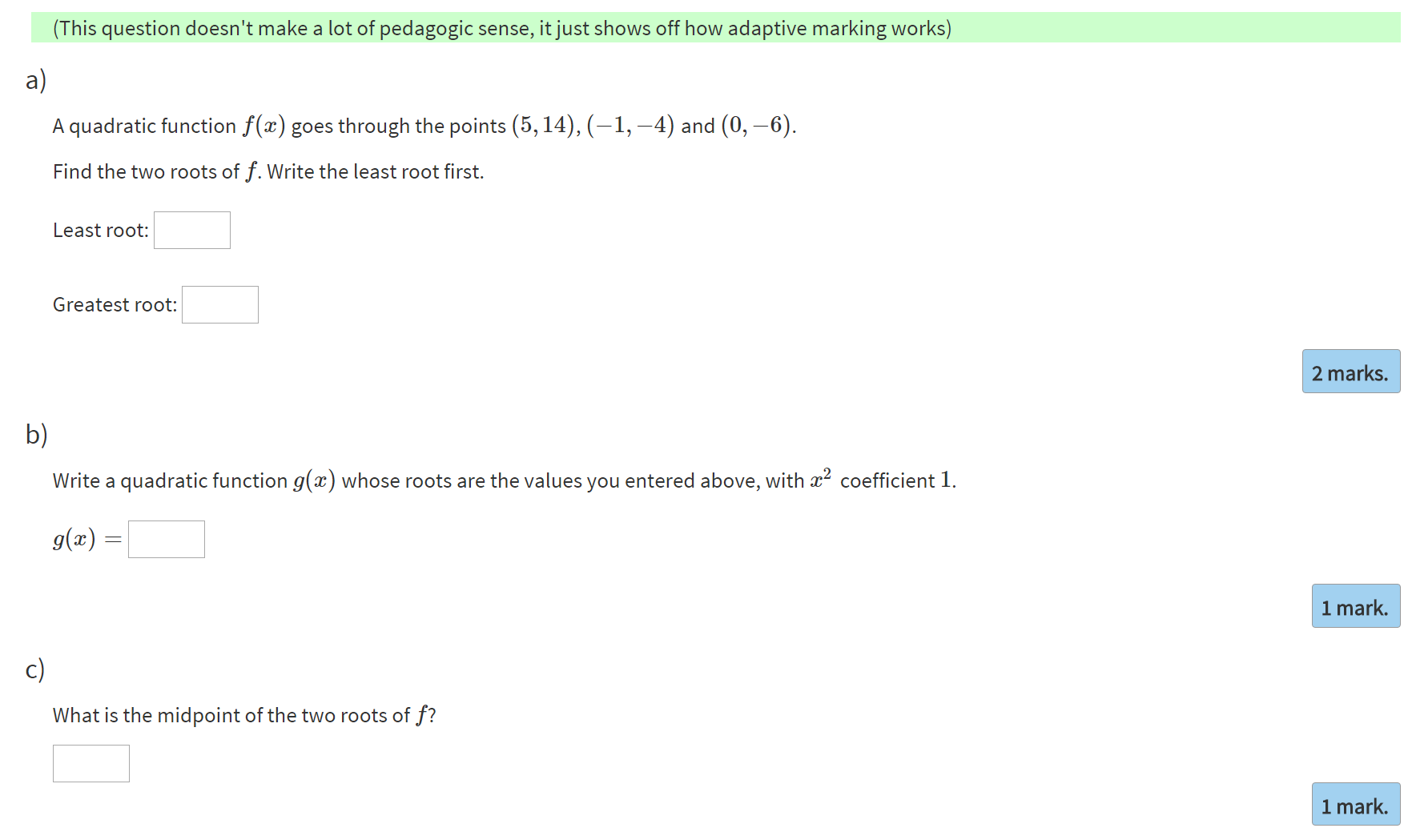

Adaptive marking

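Students kept asking for error-carried-forward marking: if they got an early part of a question wrong, they wanted later parts marked using their own answer rather than the intended one.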

This is complicated to implement, and doubly so when questions are randomised.

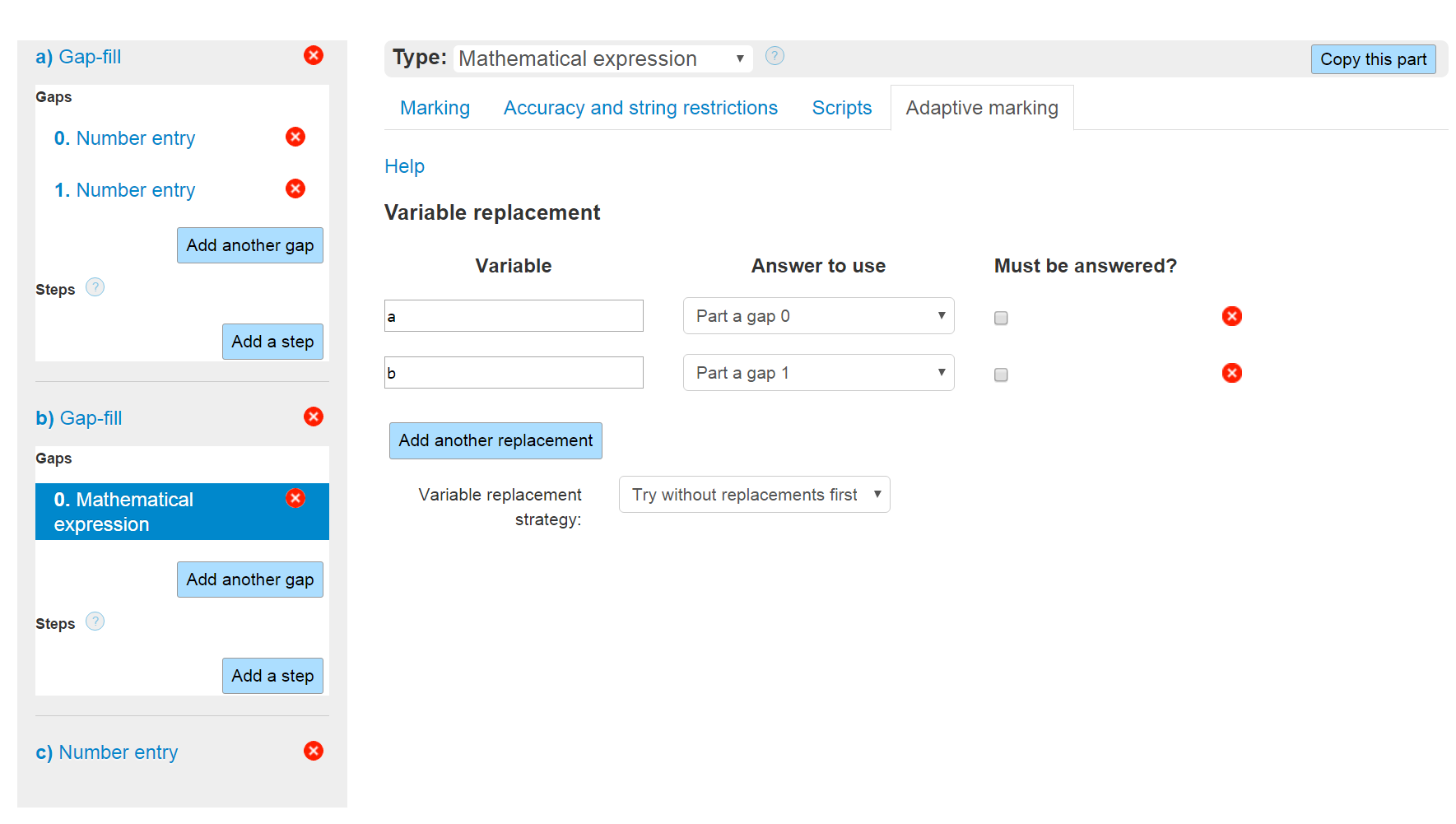

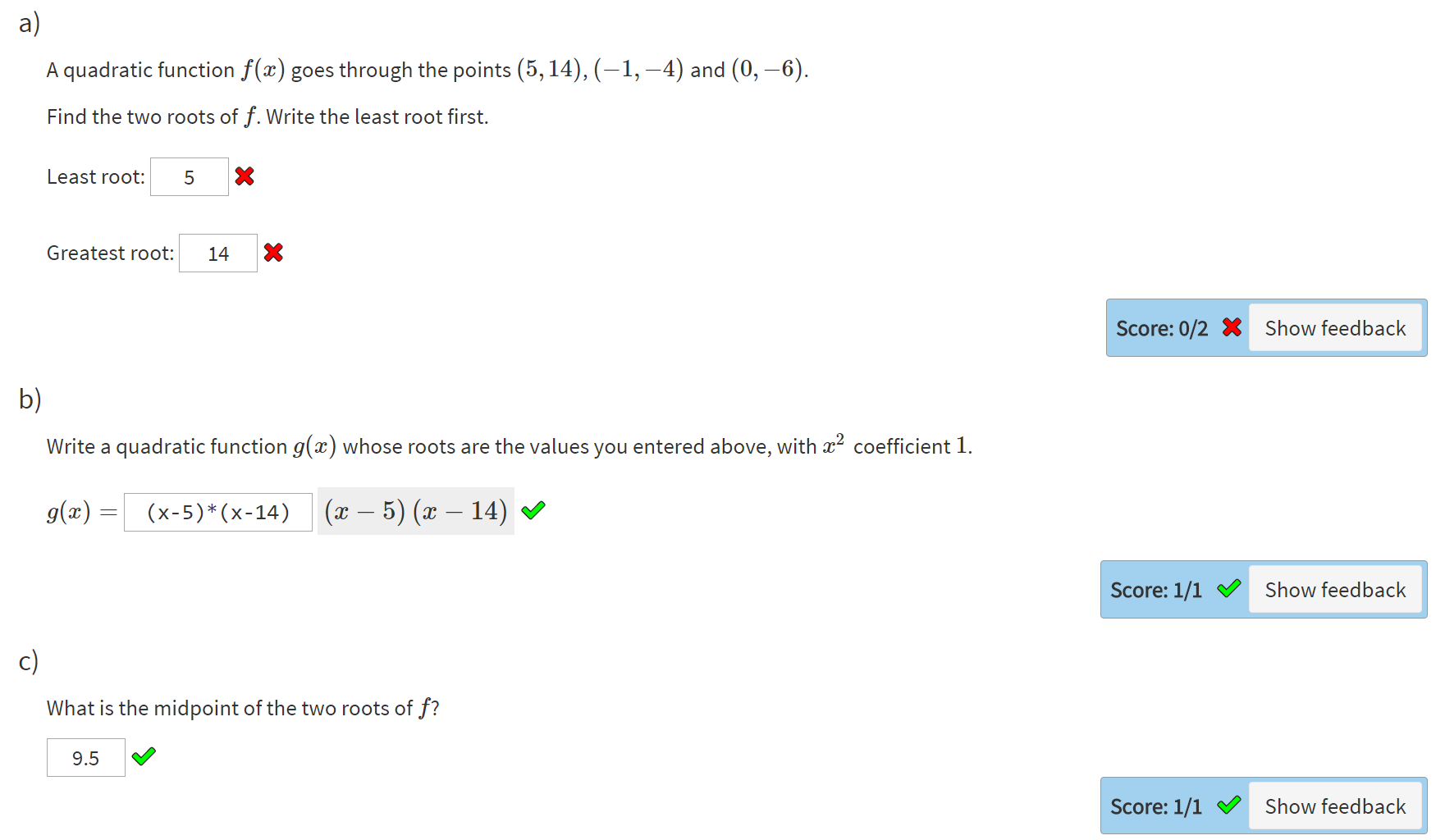

Adaptive marking

Solution: when marking a part, replace some question variable(s) with the student's answers to previous parts.

A new "correct" answer is automatically calculated.

It works surprisingly well!
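
The idea of substituting a student's earlier answer for a question variable can be sketched in a few lines of Python. This is an illustrative model under stated assumptions, not Numbas's implementation: the question, the variable names `a`, `b`, `c`, `x`, `y`, and the hand-written dependency order are all hypothetical. Part (a) asks for `x = a * b`, and part (b) asks for `y = x + c`.

```python
# Hypothetical derived variables, each a function of the values it depends on.
DEFINITIONS = {
    "x": lambda v: v["a"] * v["b"],  # correct answer to part (a)
    "y": lambda v: v["x"] + v["c"],  # correct answer to part (b)
}
ORDER = ["x", "y"]  # dependency order, written out by hand for this sketch

def evaluate(base, replacements=None):
    """Compute derived variables, holding any replaced variables fixed."""
    replacements = replacements or {}
    values = dict(base)
    for name in ORDER:
        if name in replacements:
            values[name] = replacements[name]  # e.g. the student's own answer
        else:
            values[name] = DEFINITIONS[name](values)
    return values

def mark_part_b(base, student_a, student_b, adaptive=True):
    """Return (score, expected answer) for part (b)."""
    # Adaptive marking: replace x with the student's answer to part (a),
    # so the "correct" answer to part (b) is recalculated from it.
    replacements = {"x": student_a} if adaptive else {}
    expected = evaluate(base, replacements)["y"]
    return (1 if student_b == expected else 0), expected

base = {"a": 3, "b": 4, "c": 5}               # so x = 12 and y = 17
print(mark_part_b(base, 13, 18))              # (1, 18)
print(mark_part_b(base, 13, 18, adaptive=False))  # (0, 17)
```

A student who answers 13 for part (a) and then correctly adds 5 gets credit for part (b) under adaptive marking, but not under plain marking. Because the new expected answer is recalculated from the variable definitions, the same approach works however the question's values were randomised.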

Demo

Thanks!

Website

Contact

- Email: numbas@ncl.ac.uk

- Twitter: @NclNumbas